The ink on a federal contract rarely carries the weight of a white flag. But when Sam Altman, the architect of OpenAI, announced a formal agreement with the United States government this week, the atmosphere in Silicon Valley shifted from frantic innovation to a heavy, calculated stillness. It was the sound of a door locking.

For months, the tension between the titans of artificial intelligence and the iron halls of Washington has been a low-frequency hum. Now, it is a roar. The deal, a partnership centered on safety testing and federal oversight, comes at a moment of profound political volatility. While the headlines focus on the logistics of "compliance" and "frameworks," the real story is the desperate scramble for a chair before the music stops.

Consider the room where these decisions happen. It is not filled with glowing holographic interfaces or lines of cascading green code. It is filled with mahogany tables, lukewarm coffee, and the terrifying realization that the math has outpaced the law.

The Ghost of the Trump-Anthropic Fallout

To understand why Altman walked into this particular room, we have to look at the wreckage left behind by his rivals. Just days ago, the relationship between the Trump administration and Anthropic—OpenAI’s fiercest ideological competitor—veered into a ditch.

Political friction is rarely about the technology itself. It is about control. When Anthropic’s ties with the current administration frayed, it created a vacuum. In the high-stakes game of Silicon Valley, a vacuum is either a death trap or an invitation. Altman, ever the pragmatist, saw the invitation.

He chose to lean in while others were pushed out.

The agreement establishes a direct pipeline between OpenAI’s most secretive labs and the U.S. AI Safety Institute. It means the government gets a look under the hood before the engine starts. For a company that once prided itself on being a nimble, independent research lab, this is a radical metamorphosis. It is the moment the disruptor becomes a ward of the state.

The Invisible Stakes of the "Safety" Narrative

We often talk about AI safety as if it’s a matter of preventing a robot uprising. The reality is far more mundane and, perhaps, more chilling. Safety, in the eyes of a government, is synonymous with stability.

A hypothetical researcher—let’s call her Sarah—spends her nights at a terminal in San Francisco. She isn't trying to build a digital god. She is trying to ensure that a massive language model doesn't accidentally teach a user how to bypass the security protocols of a regional power grid. This is the "frontier" the government is worried about.

When OpenAI agrees to let federal eyes monitor these tests, the government is not just checking for bugs. Both sides are negotiating the boundaries of digital sovereignty. If the U.S. government holds the keys to the safety checks, it effectively holds a kill switch.

Altman knows this. He also knows that in a world where the Trump administration is increasingly skeptical of "big tech" but obsessed with national dominance, being the "safe" partner is the only way to remain the "dominant" partner.

Why the Anthropic Fallout Changed the Map

The collapse of the Anthropic dialogue wasn't just a PR blunder. It was a warning shot. For years, AI labs have operated on the assumption that they were too important to be regulated into submission. They believed their "compute" was a form of diplomatic immunity.

They were wrong.

The fallout showed that political alignment matters as much as algorithmic efficiency. By positioning OpenAI as the cooperative child in the room, Altman is attempting to insulate his company from the populist firestorms that have scorched his peers. It is a defensive crouch disguised as a leadership move.

The economics explain the desperation. OpenAI is burning through billions of dollars in hardware costs and talent acquisition. To sustain that burn, it needs more than venture capital; it needs the structural blessing of the most powerful economy on Earth. You cannot build a global infrastructure on a foundation of regulatory uncertainty.

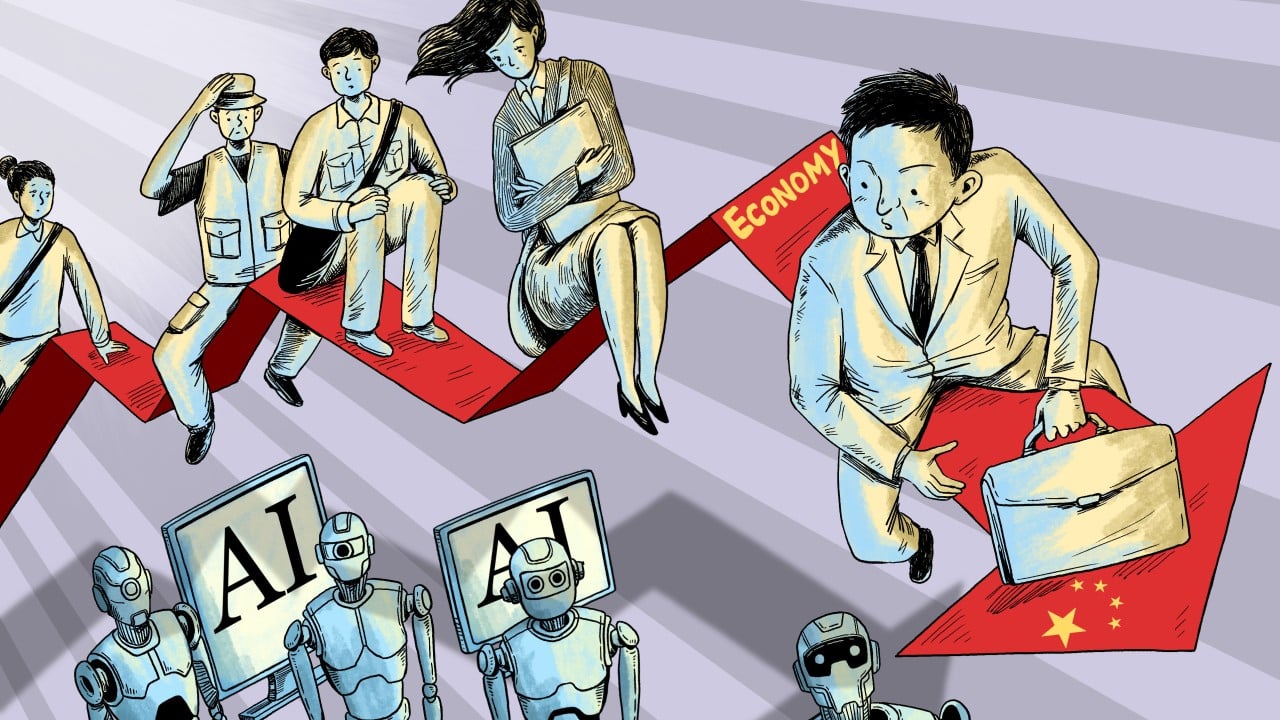

The Human Cost of the Digital Shield

Behind every line of this new agreement is a person who is afraid of being left behind. There is the Midwestern factory worker wondering if this "safe" AI will eventually "safely" replace his job. There is the federal regulator who barely understands how a neural network functions but knows he will be blamed if something goes wrong.

Altman’s deal is a promise to these people. It is a psychological shield. By inviting the government in, he is sharing the burden of the "what if."

If OpenAI releases a model that causes a market flash-crash or a deepfake crisis, they can now point to the federal seal on the box. "We followed the protocol," they will say. "The government saw what we saw."

Responsibility, once a heavy weight on the shoulders of a few founders, is being redistributed. It is being diluted.

The Illusion of Transparency

We must be careful not to mistake access for understanding.

Allowing a government agency to "test" a model that may contain trillions of parameters is like asking a librarian to vet the entire Library of Alexandria in a weekend. The sheer scale of the technology makes traditional oversight an illusion.

Imagine a map of a city that is as large as the city itself. It is useless. That is the challenge facing the U.S. AI Safety Institute. They are being given the map, but they don't yet have the eyes to read it.

Altman isn't just giving them data; he’s giving them the feeling of control. It is a masterclass in corporate diplomacy. He is trading a slice of his autonomy for a lifetime of legitimacy.

The New Architecture of Power

This deal signals the end of the "Move Fast and Break Things" era for artificial intelligence. We are entering the era of "Move Slow and Sign Everything."

The administration's fallout with Anthropic proved that the "wild west" of AI is being fenced in. The fences are being built by the government, but the blueprints are being provided by the very companies they are meant to restrain. It is a symbiotic relationship that feels more like a marriage of necessity than a partnership of shared values.

Consider what happens next: other labs will be forced to follow suit or risk being branded as "rogue." The "Open" in OpenAI has long been a subject of debate, but this deal adds a new layer of irony. The models are becoming more closed to the public and more open to the state.

This isn't just a business headline. It is a fundamental shift in how power is brokered in the 21st century. We are watching the birth of a Digital Industrial Complex.

The stakes aren't just about who wins the AI race. They are about who decides where the finish line is. For now, Sam Altman has ensured that he is the one holding the tape, even if he has to let the government hold his hand while he does it.

The mahogany table is crowded. The coffee is cold. And the world is waiting to see what happens when the "safe" machine finally wakes up.